Update similaritycal model

- .gitignore +6 -0

- app.py +24 -18

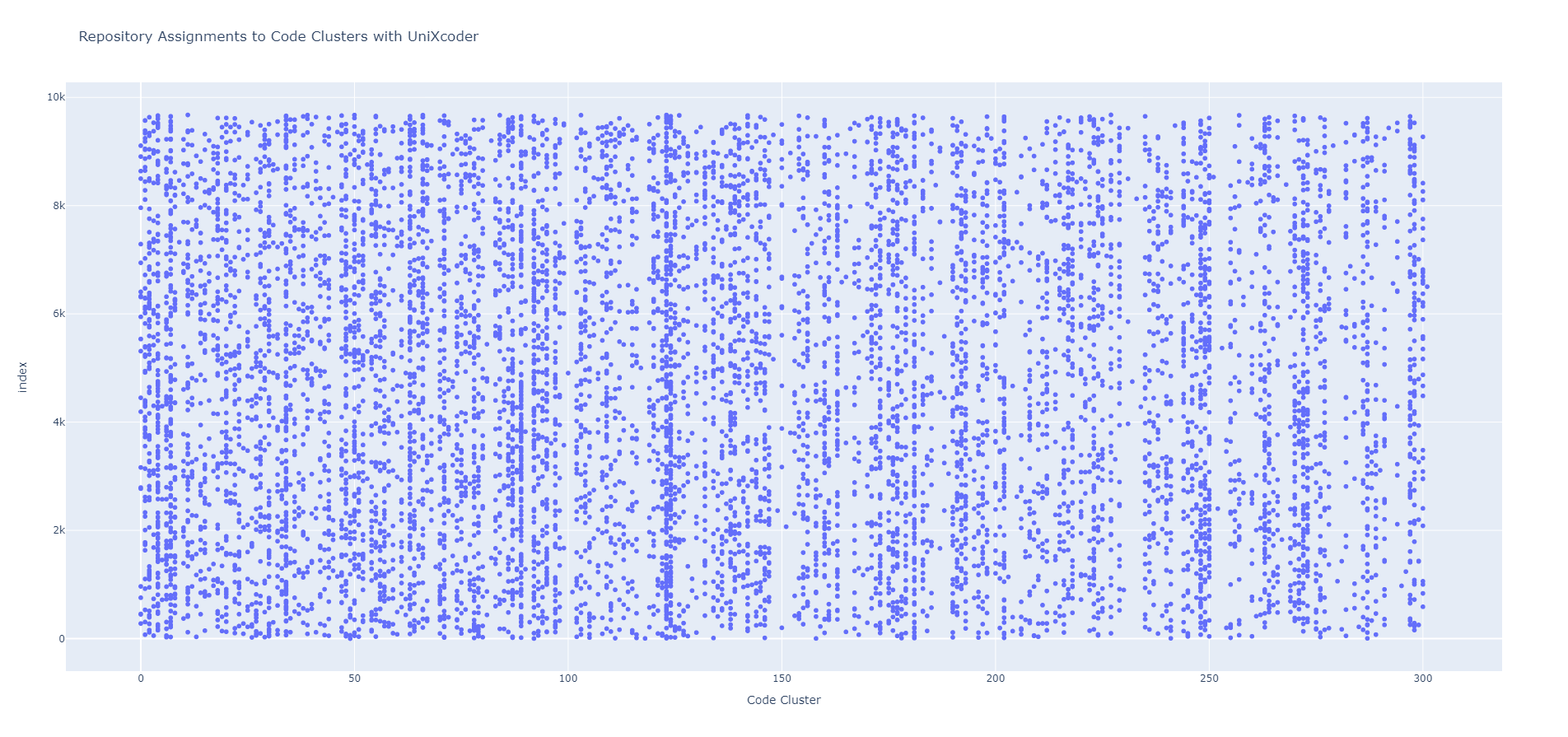

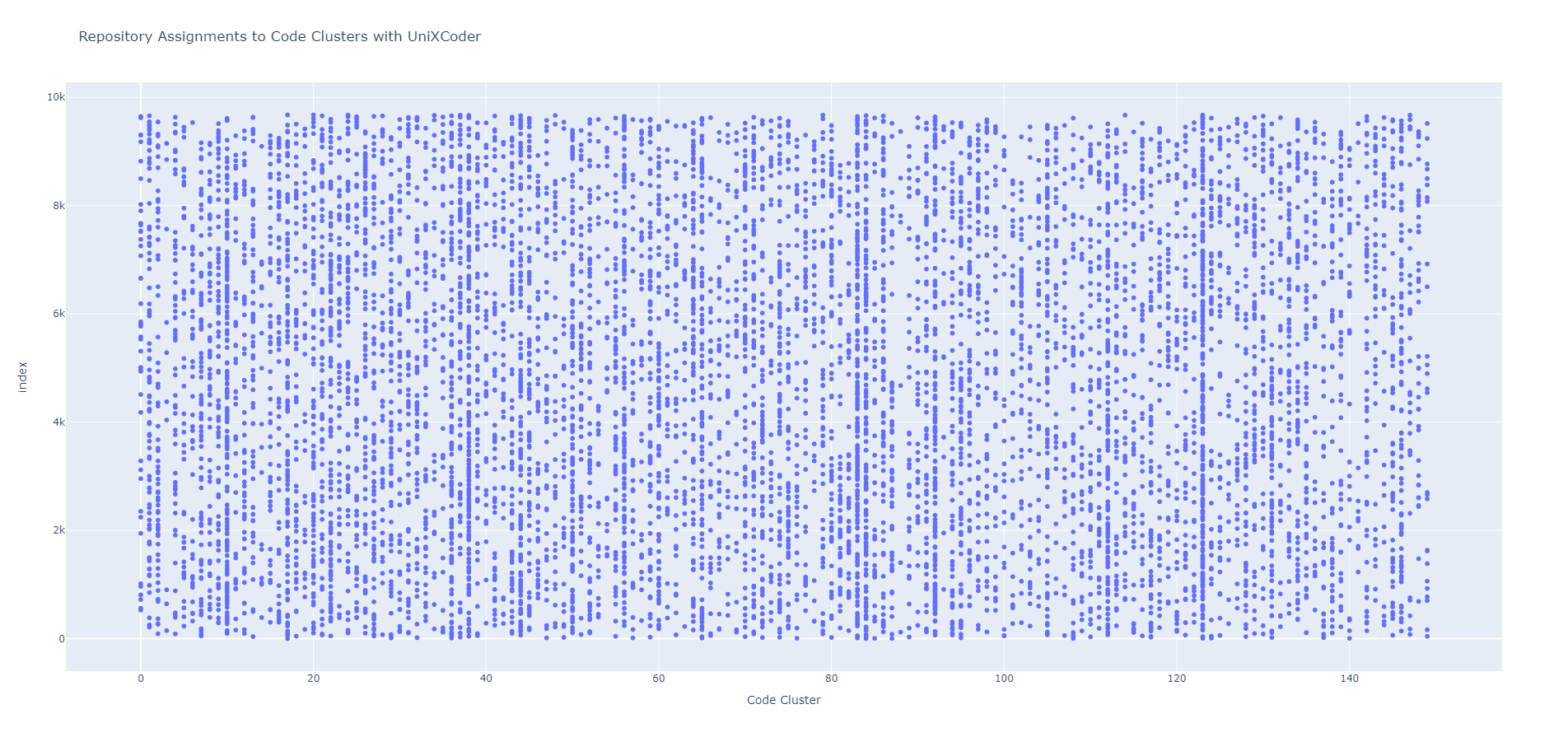

- assets/Repository-Code Cluster Assignments.png +0 -0

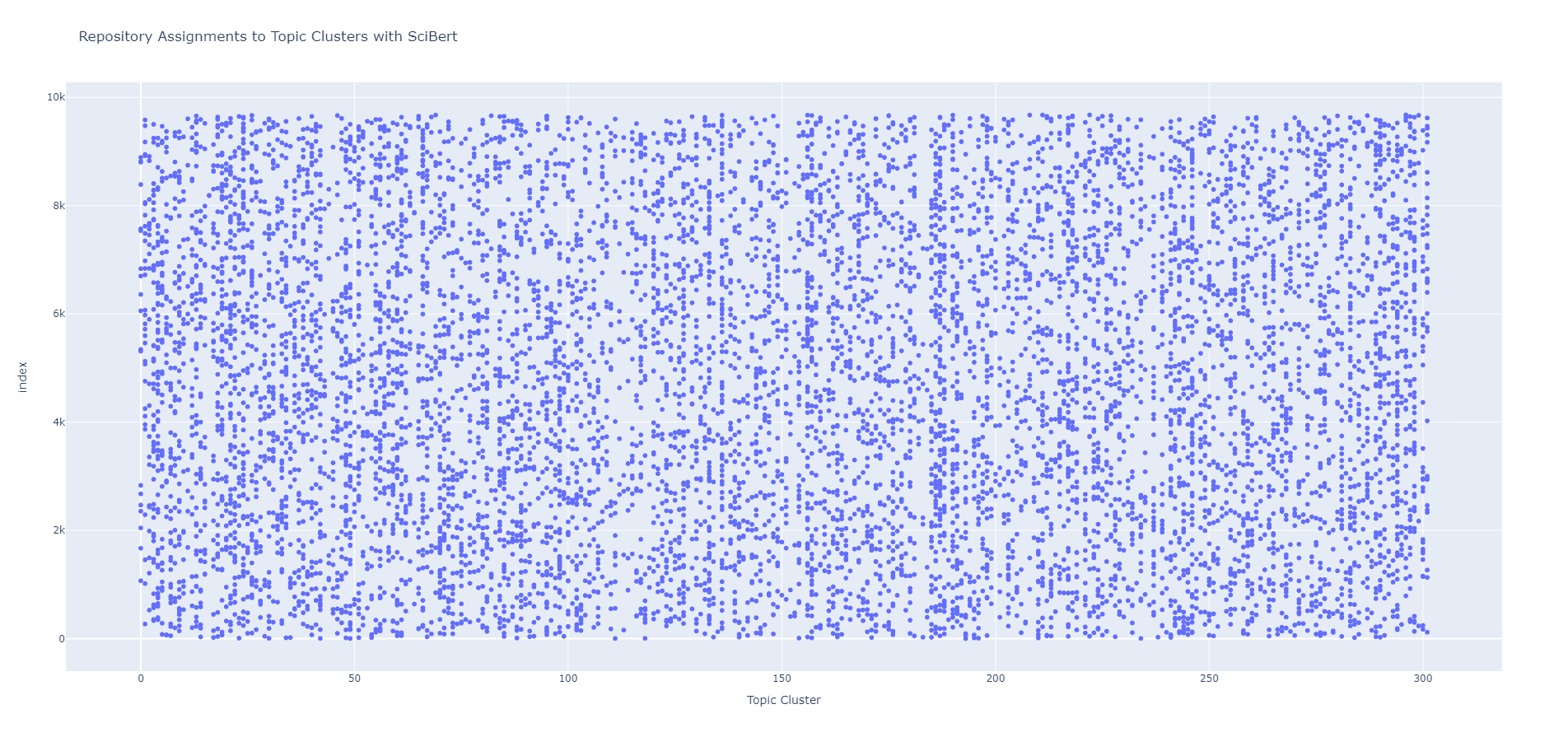

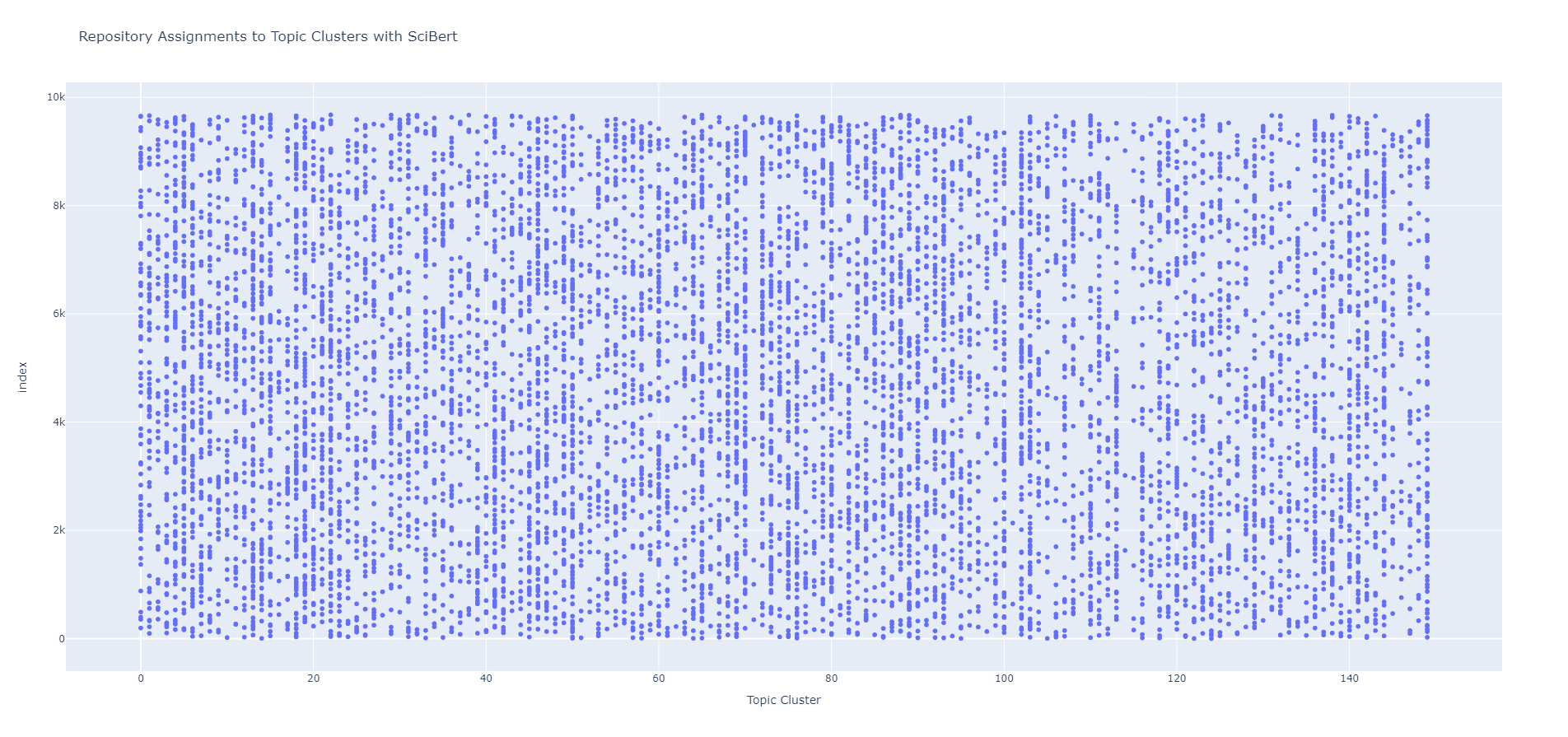

- assets/Repository-Topic Cluster Assignments.png +0 -0

- common/pair_classifier.py +4 -3

- similarityCal/__init__.py +0 -0

- data/SimilarityCal_model_NO1.pt → similarityCal/code.pt +2 -2

- similarityCal/topic.pt +3 -0

- similarityCal/utils.py +169 -0

.gitignore

CHANGED

|

@@ -161,3 +161,9 @@ cython_debug/

|

|

| 161 |

|

| 162 |

# Streamlit configs

|

| 163 |

.streamlit/

|

| 161 |

|

| 162 |

# Streamlit configs

|

| 163 |

.streamlit/

|

| 164 |

+

|

| 165 |

+

# IDE files

|

| 166 |

+

.idea/

|

| 167 |

+

|

| 168 |

+

# Mac os files

|

| 169 |

+

*.DS_Store

|

app.py

CHANGED

|

@@ -7,21 +7,22 @@ import pandas as pd

|

|

| 7 |

import numpy as np

|

| 8 |

import streamlit as st

|

| 9 |

from pathlib import Path

|

| 10 |

-

from torch import nn

|

| 11 |

from docarray import DocList

|

| 12 |

from docarray.index import InMemoryExactNNIndex

|

| 13 |

from transformers import pipeline

|

| 14 |

from transformers import AutoTokenizer, AutoModel

|

| 15 |

from common.repo_doc import RepoDoc

|

| 16 |

-

from common.pair_classifier import PairClassifier

|

| 17 |

from nltk.stem import WordNetLemmatizer

|

| 18 |

|

| 19 |

nltk.download("wordnet")

|

| 20 |

KMEANS_TOPIC_MODEL_PATH = Path(__file__).parent.joinpath("data/kmeans_model_topic_scibert.pkl")

|

| 21 |

KMEANS_CODE_MODEL_PATH = Path(__file__).parent.joinpath("data/kmeans_model_code_unixcoder.pkl")

|

| 22 |

-

|

| 23 |

SCIBERT_MODEL_PATH = "allenai/scibert_scivocab_uncased"

|

| 24 |

# SCIBERT_MODEL_PATH = Path(__file__).parent.joinpath("data/scibert_scivocab_uncased") # Download locally

|

|

|

|

| 25 |

device = (

|

| 26 |

"cuda"

|

| 27 |

if torch.cuda.is_available()

|

|

@@ -136,16 +137,20 @@ def load_code_kmeans_model():

|

|

| 136 |

|

| 137 |

|

| 138 |

@st.cache_resource(show_spinner="Loading SimilarityCal model...")

|

| 139 |

-

def load_similaritycal_model():

|

| 140 |

"""

|

| 141 |

The function to load SimilarityCal model

|

|

|

|

| 142 |

:return: the SimilarityCal model

|

| 143 |

"""

|

| 144 |

-

|

| 145 |

-

|

| 146 |

-

|

| 147 |

-

|

| 148 |

-

|

| 149 |

return sim_cal_model

|

| 150 |

|

| 151 |

|

|

@@ -247,27 +252,27 @@ def run_similaritycal_search(index, repo_clusters, model, query_doc, query_clust

|

|

| 247 |

:return: result dataframe

|

| 248 |

"""

|

| 249 |

docs = index._docs

|

| 250 |

-

input_embeddings_list = []

|

| 251 |

result_dl = DocList[RepoDoc]()

|

|

|

|

| 252 |

for doc in docs:

|

| 253 |

if query_cluster_number != repo_clusters[doc.name]:

|

| 254 |

continue

|

| 255 |

if doc.name != query_doc.name:

|

| 256 |

e1, e2 = (torch.Tensor(query_doc.repository_embedding),

|

| 257 |

torch.Tensor(doc.repository_embedding))

|

| 258 |

-

|

| 259 |

-

|

| 260 |

result_dl.append(doc)

|

| 261 |

|

| 262 |

-

|

| 263 |

-

|

| 264 |

-

|

| 265 |

-

similarity_scores =

|

| 266 |

df = result_dl.to_dataframe()

|

| 267 |

df["scores"] = similarity_scores

|

| 268 |

|

| 269 |

sorted_df = df.sort_values(by='scores', ascending=False).reset_index(drop=True).head(limit)

|

| 270 |

-

sorted_df["rankings"] = sorted_df["scores"].rank(ascending=False).astype(int)

|

| 271 |

sorted_df.drop(columns="scores", inplace=True)

|

| 272 |

|

| 273 |

return sorted_df

|

|

@@ -283,7 +288,6 @@ if __name__ == "__main__":

|

|

| 283 |

tokenizer, scibert_model = load_scibert_model()

|

| 284 |

topic_kmeans = load_topic_kmeans_model()

|

| 285 |

code_kmeans = load_code_kmeans_model()

|

| 286 |

-

sim_cal_model = load_similaritycal_model()

|

| 287 |

|

| 288 |

# Setting the sidebar

|

| 289 |

with st.sidebar:

|

|

@@ -507,6 +511,7 @@ if __name__ == "__main__":

|

|

| 507 |

|

| 508 |

with code_cluster_tab:

|

| 509 |

if query_doc.repository_embedding is not None:

|

|

|

|

| 510 |

cluster_df = run_similaritycal_search(index, repo_code_clusters, sim_cal_model,

|

| 511 |

query_doc, code_cluster_number, limit)

|

| 512 |

code_cluster_numbers = run_code_cluster_search(repo_code_clusters, cluster_df["name"])

|

|

@@ -519,6 +524,7 @@ if __name__ == "__main__":

|

|

| 519 |

|

| 520 |

with topic_cluster_tab:

|

| 521 |

if query_doc.repository_embedding is not None:

|

|

|

|

| 522 |

cluster_df = run_similaritycal_search(index, repo_topic_clusters, sim_cal_model,

|

| 523 |

query_doc, topic_cluster_number, limit)

|

| 524 |

topic_cluster_numbers = run_topic_cluster_search(repo_topic_clusters, cluster_df["name"])

|

|

|

|

| 7 |

import numpy as np

|

| 8 |

import streamlit as st

|

| 9 |

from pathlib import Path

|

|

|

|

| 10 |

from docarray import DocList

|

| 11 |

from docarray.index import InMemoryExactNNIndex

|

| 12 |

from transformers import pipeline

|

| 13 |

from transformers import AutoTokenizer, AutoModel

|

| 14 |

from common.repo_doc import RepoDoc

|

|

|

|

| 15 |

from nltk.stem import WordNetLemmatizer

|

| 16 |

|

| 17 |

+

from similarityCal.utils import calculate_similarity

|

| 18 |

+

|

| 19 |

nltk.download("wordnet")

|

| 20 |

KMEANS_TOPIC_MODEL_PATH = Path(__file__).parent.joinpath("data/kmeans_model_topic_scibert.pkl")

|

| 21 |

KMEANS_CODE_MODEL_PATH = Path(__file__).parent.joinpath("data/kmeans_model_code_unixcoder.pkl")

|

| 22 |

+

|

| 23 |

SCIBERT_MODEL_PATH = "allenai/scibert_scivocab_uncased"

|

| 24 |

# SCIBERT_MODEL_PATH = Path(__file__).parent.joinpath("data/scibert_scivocab_uncased") # Download locally

|

| 25 |

+

|

| 26 |

device = (

|

| 27 |

"cuda"

|

| 28 |

if torch.cuda.is_available()

|

|

|

|

| 137 |

|

| 138 |

|

| 139 |

@st.cache_resource(show_spinner="Loading SimilarityCal model...")

|

| 140 |

+

def load_similaritycal_model(mode: str):

|

| 141 |

"""

|

| 142 |

The function to load SimilarityCal model

|

| 143 |

+

mode: 'code' or 'topic'

|

| 144 |

:return: the SimilarityCal model

|

| 145 |

"""

|

| 146 |

+

if mode == 'topic':

|

| 147 |

+

sim_cal_model = torch.load('similarityCal/topic.pt')

|

| 148 |

+

elif mode == 'code':

|

| 149 |

+

sim_cal_model = torch.load('similarityCal/code.pt')

|

| 150 |

+

else:

|

| 151 |

+

raise ValueError("parameter 'mode' must be 'code' or 'topic'")

|

| 152 |

+

sim_cal_model.to(device)

|

| 153 |

+

sim_cal_model.eval()

|

| 154 |

return sim_cal_model

|

| 155 |

|

| 156 |

|

|

|

|

| 252 |

:return: result dataframe

|

| 253 |

"""

|

| 254 |

docs = index._docs

|

|

|

|

| 255 |

result_dl = DocList[RepoDoc]()

|

| 256 |

+

e1_list, e2_list = [], []

|

| 257 |

for doc in docs:

|

| 258 |

if query_cluster_number != repo_clusters[doc.name]:

|

| 259 |

continue

|

| 260 |

if doc.name != query_doc.name:

|

| 261 |

e1, e2 = (torch.Tensor(query_doc.repository_embedding),

|

| 262 |

torch.Tensor(doc.repository_embedding))

|

| 263 |

+

e1_list.append(e1)

|

| 264 |

+

e2_list.append(e2)

|

| 265 |

result_dl.append(doc)

|

| 266 |

|

| 267 |

+

e1_list = torch.stack(e1_list).to(device)

|

| 268 |

+

e2_list = torch.stack(e2_list).to(device)

|

| 269 |

+

model.eval()

|

| 270 |

+

similarity_scores = calculate_similarity(model, e1_list, e2_list)[:, 1].cpu().detach().numpy()

|

| 271 |

df = result_dl.to_dataframe()

|

| 272 |

df["scores"] = similarity_scores

|

| 273 |

|

| 274 |

sorted_df = df.sort_values(by='scores', ascending=False).reset_index(drop=True).head(limit)

|

| 275 |

+

sorted_df["rankings"] = sorted_df["scores"].rank(ascending=False, method='first').astype(int)

|

| 276 |

sorted_df.drop(columns="scores", inplace=True)

|

| 277 |

|

| 278 |

return sorted_df

|

|

|

|

| 288 |

tokenizer, scibert_model = load_scibert_model()

|

| 289 |

topic_kmeans = load_topic_kmeans_model()

|

| 290 |

code_kmeans = load_code_kmeans_model()

|

|

|

|

| 291 |

|

| 292 |

# Setting the sidebar

|

| 293 |

with st.sidebar:

|

|

|

|

| 511 |

|

| 512 |

with code_cluster_tab:

|

| 513 |

if query_doc.repository_embedding is not None:

|

| 514 |

+

sim_cal_model = load_similaritycal_model("code")

|

| 515 |

cluster_df = run_similaritycal_search(index, repo_code_clusters, sim_cal_model,

|

| 516 |

query_doc, code_cluster_number, limit)

|

| 517 |

code_cluster_numbers = run_code_cluster_search(repo_code_clusters, cluster_df["name"])

|

|

|

|

| 524 |

|

| 525 |

with topic_cluster_tab:

|

| 526 |

if query_doc.repository_embedding is not None:

|

| 527 |

+

sim_cal_model = load_similaritycal_model("topic")

|

| 528 |

cluster_df = run_similaritycal_search(index, repo_topic_clusters, sim_cal_model,

|

| 529 |

query_doc, topic_cluster_number, limit)

|

| 530 |

topic_cluster_numbers = run_topic_cluster_search(repo_topic_clusters, cluster_df["name"])

|
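The `rank(ascending=False, method='first')` change in `run_similaritycal_search` matters when two repositories get the same score. pandas' default tie method is `average`, so tied rows receive a fractional rank that `.astype(int)` then truncates, which produces duplicate rankings. The following is a minimal pure-Python sketch of the two tie rules, not the pandas implementation; `rank_descending` is a hypothetical helper written only for illustration.

```python
def rank_descending(scores, method="average"):
    """Rank scores from highest (rank 1) to lowest, resolving ties by `method`."""
    # sorted() is stable, so tied scores keep their original order.
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    ranks = [0.0] * len(scores)
    pos = 0
    while pos < len(order):
        # Find the end of the current run of tied scores.
        end = pos
        while end + 1 < len(order) and scores[order[end + 1]] == scores[order[pos]]:
            end += 1
        for k in range(pos, end + 1):
            if method == "first":
                # Ties are numbered in order of appearance.
                ranks[order[k]] = float(k + 1)
            else:
                # "average": every tied item gets the mean of the tied positions.
                ranks[order[k]] = (pos + 1 + end + 1) / 2
        pos = end + 1
    return ranks


scores = [0.9, 0.9, 0.7]
# Truncating to int mirrors the .astype(int) call in the diff.
average_ranks = [int(r) for r in rank_descending(scores, "average")]
first_ranks = [int(r) for r in rank_descending(scores, "first")]
print(average_ranks)  # [1, 1, 3]
print(first_ranks)    # [1, 2, 3]
```

With the `first` rule every row gets a distinct integer rank, which is presumably why the commit switched to it before dropping the `scores` column.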

assets/Repository-Code Cluster Assignments.png

CHANGED

|

|

assets/Repository-Topic Cluster Assignments.png

CHANGED

|

|

common/pair_classifier.py

CHANGED

|

@@ -29,9 +29,10 @@ class PairClassifier(nn.Module):

|

|

| 29 |

nn.Linear(1000, 2),

|

| 30 |

)

|

| 31 |

|

| 32 |

-

def forward(self,

|

| 33 |

-

|

| 34 |

-

|

|

|

|

| 35 |

twins = torch.cat([e1, e2], dim=1)

|

| 36 |

res = self.net(twins)

|

| 37 |

return res

|

|

|

|

| 29 |

nn.Linear(1000, 2),

|

| 30 |

)

|

| 31 |

|

| 32 |

+

def forward(self, data1, data2):

|

| 33 |

+

# modify the logic of loading the data

|

| 34 |

+

e1 = self.encoder(data1)

|

| 35 |

+

e2 = self.encoder(data2)

|

| 36 |

twins = torch.cat([e1, e2], dim=1)

|

| 37 |

res = self.net(twins)

|

| 38 |

return res

|

similarityCal/__init__.py

ADDED

|

File without changes

|

data/SimilarityCal_model_NO1.pt → similarityCal/code.pt

RENAMED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4fca98b665ac3a35db1fa333b21f97d71cda5f2af27229d9e7d93b2fa8696a03

|

| 3 |

+

size 102424453

|

similarityCal/topic.pt

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4b5481fc8c348f1784c29374cde09ad9374ad7c201e33b4748e6153c1ab4c832

|

| 3 |

+

size 102424470

|

similarityCal/utils.py

ADDED

|

@@ -0,0 +1,169 @@

|

| 1 |

+

import json

|

| 2 |

+

import os

|

| 3 |

+

from pathlib import Path

|

| 4 |

+

|

| 5 |

+

import torch

|

| 6 |

+

from docarray.index import InMemoryExactNNIndex

|

| 7 |

+

from common.repo_doc import RepoDoc

|

| 8 |

+

import random

|

| 9 |

+

from torchmetrics.classification import Accuracy, Precision, Recall, F1Score, AUROC

|

| 10 |

+

from tqdm import tqdm

|

| 11 |

+

|

| 12 |

+

INDEX_PATH = Path(__file__).parent.parent.joinpath("data")

|

| 13 |

+

TOPIC_CLUSTER_PATH = Path(__file__).parent.parent.joinpath("data", "repo_topic_clusters.json")

|

| 14 |

+

CODE_CLUSTER_PATH = Path(__file__).parent.parent.joinpath("data", "repo_code_clusters.json")

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

def read_repo_cluster(filename):

|

| 18 |

+

# return repo name - cluster id key value pair

|

| 19 |

+

with open(filename, 'r', encoding='utf-8') as file:

|

| 20 |

+

data = json.load(file)

|

| 21 |

+

return data

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

def find_files_in_directory(directory):

|

| 25 |

+

# loop all index files

|

| 26 |

+

files = []

|

| 27 |

+

for file in os.listdir(directory):

|

| 28 |

+

if file[:5] == "index" and file[5] != ".":

|

| 29 |

+

files.append(os.path.join(directory, file))

|

| 30 |

+

return files

|

| 31 |

+

|

| 32 |

+

|

| 33 |

+

def read_repo_embedding():

|

| 34 |

+

# return repo name - embedding k-v pair

|

| 35 |

+

map = {}

|

| 36 |

+

for filename in find_files_in_directory(INDEX_PATH):

|

| 37 |

+

data = InMemoryExactNNIndex[RepoDoc](index_file_path=Path(__file__).parent.joinpath(filename))

|

| 38 |

+

docs_tmp = data._docs

|

| 39 |

+

for doc in docs_tmp:

|

| 40 |

+

map[doc.name] = doc.repository_embedding

|

| 41 |

+

return map

|

| 42 |

+

|

| 43 |

+

|

| 44 |

+

def build_cluster_repo_embedding(mode: str):

|

| 45 |

+

"""

|

| 46 |

+

build the dataset according to the code or topic cluster assignments

|

| 47 |

+

where mode is "code" or "topic"

|

| 48 |

+

"""

|

| 49 |

+

embedding = read_repo_embedding()

|

| 50 |

+

if mode == "code":

|

| 51 |

+

cluster_id = read_repo_cluster(CODE_CLUSTER_PATH)

|

| 52 |

+

elif mode == "topic":

|

| 53 |

+

cluster_id = read_repo_cluster(TOPIC_CLUSTER_PATH)

|

| 54 |

+

else:

|

| 55 |

+

raise ValueError("parameter 'mode' must be 'code' or 'topic'")

|

| 56 |

+

data = []

|

| 57 |

+

for name in embedding:

|

| 58 |

+

data.append({'name': name, 'embedding': embedding[name], 'id': cluster_id[name]})

|

| 59 |

+

return data

|

| 60 |

+

|

| 61 |

+

|

| 62 |

+

def build_dataset(data, ratio=0.7):

|

| 63 |

+

"""

|

| 64 |

+

return the train set and test set; each item is (index1, index2, (same, not_same))

|

| 65 |

+

"""

|

| 66 |

+

positive_repo = []

|

| 67 |

+

negative_repo = []

|

| 68 |

+

n = len(data)

|

| 69 |

+

# build the binary dataset

|

| 70 |

+

for i in range(n):

|

| 71 |

+

for j in range(i, n):

|

| 72 |

+

if data[i]['id'] == data[j]['id']:

|

| 73 |

+

positive_repo.append((i, j, (1.0, 0.0)))

|

| 74 |

+

positive_repo.append((j, i, (1.0, 0.0)))

|

| 75 |

+

else:

|

| 76 |

+

negative_repo.append((i, j, (0.0, 1.0)))

|

| 77 |

+

negative_repo.append((j, i, (0.0, 1.0)))

|

| 78 |

+

# make balance

|

| 79 |

+

positive_length = len(positive_repo)

|

| 80 |

+

negative_repo = random.choices(negative_repo, k=positive_length)

|

| 81 |

+

# split the dataset

|

| 82 |

+

random.shuffle(positive_repo)

|

| 83 |

+

random.shuffle(negative_repo)

|

| 84 |

+

split_index = int(positive_length * ratio)

|

| 85 |

+

train_set = positive_repo[:split_index] + negative_repo[:split_index]

|

| 86 |

+

random.shuffle(train_set)

|

| 87 |

+

test_set = positive_repo[split_index:] + negative_repo[split_index:]

|

| 88 |

+

random.shuffle(test_set)

|

| 89 |

+

print("Positive data:", len(positive_repo))

|

| 90 |

+

print("Negative data:", len(negative_repo))

|

| 91 |

+

return train_set, test_set

|
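One detail of `build_dataset` worth noting: `random.choices` samples with replacement, so the balanced negative set can contain the same pair more than once, and a duplicated pair may land on both sides of the train/test split. The sketch below contrasts it with `random.sample`, which draws without replacement. `balance_negatives`, the seeded `random.Random` instance, and the toy pair lists are assumptions made for illustration and are not part of the commit.

```python
import random


def balance_negatives(positives, negatives, seed=0, with_replacement=True):
    """Pick negatives to match the number of positives (hypothetical helper)."""
    rng = random.Random(seed)  # local RNG so the sketch is reproducible
    k = len(positives)
    if with_replacement:
        # Same call shape as in build_dataset: duplicates are possible.
        return rng.choices(negatives, k=k)
    # Without replacement we can never draw more items than exist.
    return rng.sample(negatives, k=min(k, len(negatives)))


positives = [(0, 1), (1, 0), (2, 3), (3, 2)]
negatives = [(0, 2), (0, 3), (1, 2)]

with_rep = balance_negatives(positives, negatives, with_replacement=True)
without_rep = balance_negatives(positives, negatives, with_replacement=False)

print(len(with_rep), len(set(with_rep)))        # 4 draws from 3 pairs: some repeat
print(len(without_rep), len(set(without_rep)))  # 3 draws, all distinct
```

In the usual case negatives far outnumber positives, so either call yields a balanced set; the difference only shows up as occasional repeated pairs.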

| 92 |

+

|

| 93 |

+

|

| 94 |

+

def train_epoch(epoch, model, loader, device, criterion, optimizer):

|

| 95 |

+

model.train()

|

| 96 |

+

accuracy = Accuracy(task='binary')

|

| 97 |

+

precision = Precision(task='binary')

|

| 98 |

+

recall = Recall(task='binary')

|

| 99 |

+

f1 = F1Score(task='binary')

|

| 100 |

+

auroc = AUROC(task='binary')

|

| 101 |

+

accuracy.to(device)

|

| 102 |

+

precision.to(device)

|

| 103 |

+

recall.to(device)

|

| 104 |

+

f1.to(device)

|

| 105 |

+

auroc.to(device)

|

| 106 |

+

total_loss = 0

|

| 107 |

+

count = 0

|

| 108 |

+

for repo1, repo2, label in tqdm(loader):

|

| 109 |

+

count += len(label)

|

| 110 |

+

optimizer.zero_grad()

|

| 111 |

+

repo1 = repo1.to(device)

|

| 112 |

+

repo2 = repo2.to(device)

|

| 113 |

+

label = label.to(device)

|

| 114 |

+

pred = model(repo1, repo2)

|

| 115 |

+

|

| 116 |

+

loss = criterion(pred, label)

|

| 117 |

+

loss.backward()

|

| 118 |

+

total_loss += loss.item()

|

| 119 |

+

optimizer.step()

|

| 120 |

+

|

| 121 |

+

accuracy(pred, label)

|

| 122 |

+

precision(pred, label)

|

| 123 |

+

recall(pred, label)

|

| 124 |

+

f1(pred, label)

|

| 125 |

+

auroc(pred, label)

|

| 126 |

+

print("Epoch", epoch, "Train loss:", total_loss / count, "Acc", accuracy.compute().item(), "Precision:",

|

| 127 |

+

precision.compute().item(), "Recall:", recall.compute().item(), "F1:", f1.compute().item(),

|

| 128 |

+

"AUROC:", auroc.compute().item())

|

| 129 |

+

|

| 130 |

+

|

| 131 |

+

def evaluate(model, loader, device, criterion):

|

| 132 |

+

model.eval()

|

| 133 |

+

with torch.no_grad():

|

| 134 |

+

test_accuracy = Accuracy(task='binary')

|

| 135 |

+

test_precision = Precision(task='binary')

|

| 136 |

+

test_recall = Recall(task='binary')

|

| 137 |

+

test_f1 = F1Score(task='binary')

|

| 138 |

+

test_auroc = AUROC(task='binary')

|

| 139 |

+

test_accuracy.to(device)

|

| 140 |

+

test_precision.to(device)

|

| 141 |

+

test_recall.to(device)

|

| 142 |

+

test_f1.to(device)

|

| 143 |

+

test_auroc.to(device)

|

| 144 |

+

total_loss = 0

|

| 145 |

+

count = 0

|

| 146 |

+

for repo1, repo2, label in tqdm(loader):

|

| 147 |

+

count += len(label)

|

| 148 |

+

repo1 = repo1.to(device)

|

| 149 |

+

repo2 = repo2.to(device)

|

| 150 |

+

label = label.to(device)

|

| 151 |

+

pred = model(repo1, repo2)

|

| 152 |

+

loss = criterion(pred, label)

|

| 153 |

+

total_loss += loss.item()

|

| 154 |

+

|

| 155 |

+

test_accuracy(pred, label)

|

| 156 |

+

test_precision(pred, label)

|

| 157 |

+

test_recall(pred, label)

|

| 158 |

+

test_f1(pred, label)

|

| 159 |

+

test_auroc(pred, label)

|

| 160 |

+

print("Test loss:", total_loss / count, "Acc", test_accuracy.compute().item(), "Precision:",

|

| 161 |

+

test_precision.compute().item(), "Recall:", test_recall.compute().item(), "F1:", test_f1.compute().item(),

|

| 162 |

+

"AUROC:", test_auroc.compute().item())

|

| 163 |

+

|

| 164 |

+

return test_accuracy.compute().item(), total_loss / count, test_precision.compute().item(), test_recall.compute().item(), \

|

| 165 |

+

test_f1.compute().item(), test_auroc.compute().item()

|

| 166 |

+

|

| 167 |

+

|

| 168 |

+

def calculate_similarity(model, repo_emb1, repo_emb2):

|

| 169 |

+

return torch.nn.functional.softmax(model(repo_emb1, repo_emb2) + model(repo_emb2, repo_emb1), dim=1)

|
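`calculate_similarity` adds the logits from both argument orders before applying softmax, which makes the score independent of which repository is passed first even if the model itself is order-sensitive. Here is a minimal pure-Python sketch of that idea, assuming a made-up scorer: `toy_pair_logits` is a hypothetical stand-in for the trained model, and `softmax` is reimplemented with `math` instead of `torch`.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def toy_pair_logits(a, b):
    """Hypothetical two-class scorer that is deliberately order-sensitive."""
    dot = sum(x * y for x, y in zip(a, b))
    bias = a[0] - b[0]  # changes sign when the arguments are swapped
    return [dot + bias, -dot]


def symmetric_similarity(a, b):
    """Sum the logits of both orders, then normalise, as calculate_similarity does."""
    forward = toy_pair_logits(a, b)
    backward = toy_pair_logits(b, a)
    return softmax([f + g for f, g in zip(forward, backward)])


a, b = [1.0, 2.0], [0.5, -1.0]
p_ab = symmetric_similarity(a, b)
p_ba = symmetric_similarity(b, a)
print(toy_pair_logits(a, b) == toy_pair_logits(b, a))  # False: the scorer is asymmetric
print(p_ab == p_ba)                                    # True: the combined score is not
```

Summing logits before the softmax is one way to symmetrise; averaging the two probability vectors would be another, with slightly different numbers.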