Committed by chingshuai

Commit · ddc89a5 · 1 parent: d9ffb99

Add resource files

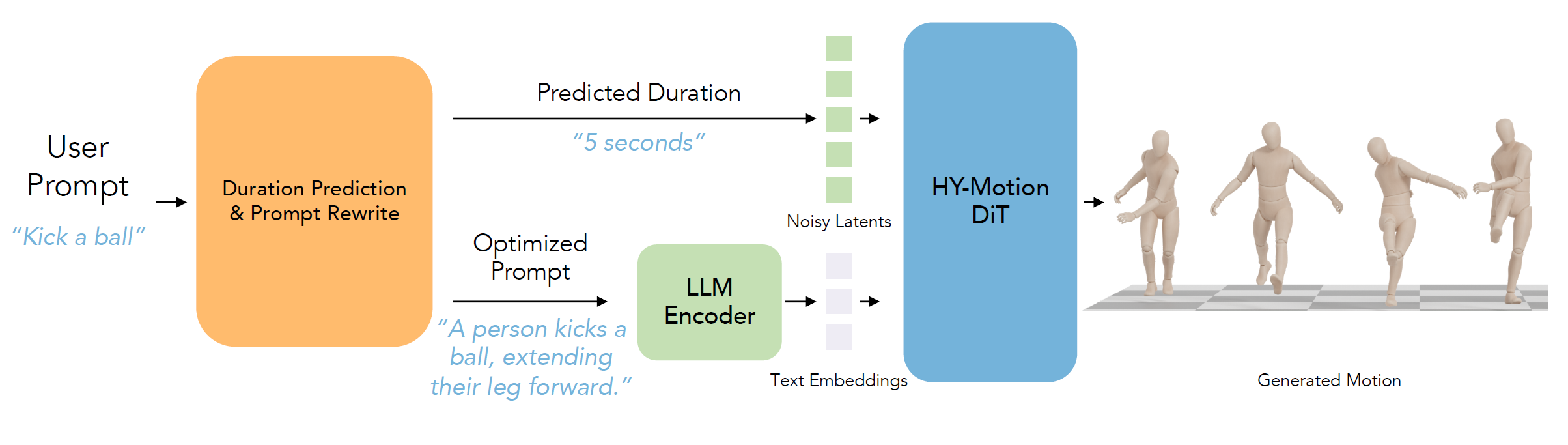

- assets/arch.png +3 -0
- assets/banner.png +3 -0
- assets/config_simplified.yml +37 -0
- assets/pipeline.png +3 -0
- assets/sotacomp.png +3 -0
- assets/teaser.png +3 -0
- assets/wooden_models/boy_Rigging_smplx_tex.fbx +3 -0
- hymotion/pipeline/motion_diffusion.py +8 -42

assets/arch.png

ADDED · stored with Git LFS

assets/banner.png

ADDED · stored with Git LFS

assets/config_simplified.yml

ADDED

`@@ -0,0 +1,37 @@`

```yaml
network_module: hymotion/network/hymotion_mmdit.HunyuanMotionMMDiT
network_module_args:
  apply_rope_to_single_branch: false
  ctxt_input_dim: 4096
  dropout: 0.0
  feat_dim: 1024
  input_dim: 201
  mask_mode: narrowband
  mlp_ratio: 4.0
  num_heads: 16
  num_layers: 18
  time_factor: 1000.0
  vtxt_input_dim: 768
train_pipeline: hymotion/pipeline/motion_diffusion.MotionFlowMatching
train_pipeline_args:
  enable_ctxt_null_feat: true
  enable_special_game_feat: true
  infer_noise_scheduler_cfg:
    validation_steps: 50
  losses_cfg:
    recons:
      name: SmoothL1Loss
      weight: 1.0
  noise_scheduler_cfg:
    method: euler
  output_mesh_fps: 30
  random_generator_on_gpu: true
  test_cfg:
    mean_std_dir: ./stats/
    text_guidance_scale: 5.0
  text_encoder_cfg:
    llm_type: qwen3
    max_length_llm: 128
  text_encoder_module: hymotion/network/text_encoders/text_encoder.HYTextModel
  train_cfg:
    cond_mask_prob: 0.1
    train_frames: 360
```
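The config names classes with strings of the form `path/to/module.ClassName` (for example `hymotion/network/hymotion_mmdit.HunyuanMotionMMDiT`) and pairs keys such as `network_module` and `train_pipeline` with a matching `*_args` mapping. The sketch below shows one way such entries could be resolved and instantiated with the standard library. It assumes the slash-separated prefix maps to a dotted Python import path and that `<key>_args` holds constructor keyword arguments; `split_module_spec`, `resolve_module_spec`, and `build_from_config` are hypothetical helpers, not part of the hymotion codebase.

```python
import importlib
from typing import Any, Dict, Mapping, Tuple


def split_module_spec(spec: str) -> Tuple[str, str]:
    """Split 'pkg/sub/module.Name' into ('pkg.sub.module', 'Name')."""
    module_path, _, attr = spec.rpartition(".")
    if not module_path or not attr:
        raise ValueError(f"expected 'path/to/module.Name', got {spec!r}")
    # Assumption: slashes in the config stand for package separators.
    return module_path.replace("/", "."), attr


def resolve_module_spec(spec: str) -> Any:
    """Import the module and return the named attribute (usually a class)."""
    module_name, attr = split_module_spec(spec)
    return getattr(importlib.import_module(module_name), attr)


def build_from_config(cfg: Mapping[str, Any], key: str) -> Any:
    """Instantiate cfg[key] with keyword arguments from cfg[key + '_args'], if present."""
    factory = resolve_module_spec(cfg[key])
    kwargs: Dict[str, Any] = dict(cfg.get(f"{key}_args", {}))
    return factory(**kwargs)


if __name__ == "__main__":
    print(split_module_spec("hymotion/network/hymotion_mmdit.HunyuanMotionMMDiT"))
    # Stdlib stand-in for a real network entry, so the demo runs without hymotion installed.
    demo_cfg = {"network_module": "collections.OrderedDict", "network_module_args": {"num_layers": 18}}
    print(build_from_config(demo_cfg, "network_module"))
```

Keeping the spec as a plain string lets the YAML stay free of Python-specific tags; the cost is that a typo in a module path only surfaces at import time.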

assets/pipeline.png

ADDED · stored with Git LFS

assets/sotacomp.png

ADDED · stored with Git LFS

assets/teaser.png

ADDED · stored with Git LFS

assets/wooden_models/boy_Rigging_smplx_tex.fbx

ADDED

`@@ -0,0 +1,3 @@`

```
version https://git-lfs.github.com/spec/v1
oid sha256:4e1a4fc5b121d5fa61a631ee22ba360ca128279d794d1ed75b2acb9486e71cc8
size 16490768
```
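The `.fbx` entry is a Git LFS pointer rather than the model itself: three `key value` lines giving the spec version, the SHA-256 object ID, and the object size in bytes (16,490,768 bytes, roughly 16.5 MB). The PNG entries are also listed as `+3 -0`, which is consistent with the same three-line pointer format. A minimal stdlib sketch for parsing such a pointer and checking downloaded bytes against it follows; it handles only the `version`, `oid`, and `size` keys seen here, and `LfsPointer`, `parse_lfs_pointer`, and `matches_pointer` are hypothetical names.

```python
import hashlib
import re
from typing import NamedTuple

LFS_SPEC_V1 = "https://git-lfs.github.com/spec/v1"


class LfsPointer(NamedTuple):
    algorithm: str
    digest: str
    size: int


def parse_lfs_pointer(text: str) -> LfsPointer:
    """Parse the 'version', 'oid', and 'size' lines of a Git LFS pointer file."""
    fields = {}
    for line in text.strip().splitlines():
        # Each pointer line is '<key> <value>', separated by a single space.
        key, sep, value = line.partition(" ")
        if not sep:
            raise ValueError(f"malformed pointer line: {line!r}")
        fields[key] = value
    if fields.get("version") != LFS_SPEC_V1:
        raise ValueError(f"unsupported pointer version: {fields.get('version')!r}")
    match = re.fullmatch(r"(sha256):([0-9a-f]{64})", fields.get("oid", ""))
    if match is None:
        raise ValueError(f"unexpected oid: {fields.get('oid')!r}")
    return LfsPointer(match.group(1), match.group(2), int(fields["size"]))


def matches_pointer(data: bytes, pointer: LfsPointer) -> bool:
    """True if the bytes have the size and SHA-256 digest recorded in the pointer."""
    return len(data) == pointer.size and hashlib.sha256(data).hexdigest() == pointer.digest
```

Comparing the size first avoids hashing a file that is obviously incomplete, for example when a clone fetched only the pointer.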

hymotion/pipeline/motion_diffusion.py

CHANGED

```diff
@@ -36,7 +36,6 @@ def length_to_mask(lengths: Tensor, max_len: int) -> Tensor:
 
 
 def start_end_frame_to_mask(start_frame: Tensor, end_frame: Tensor, max_len: int) -> Tensor:
-    # Generate a (B, max_len) mask that is True only within [start_frame, end_frame] and False elsewhere
     assert (start_frame >= 0).all() and (end_frame >= 0).all(), f"start_frame={start_frame}, end_frame={end_frame}"
     lengths = end_frame - start_frame + 1
     assert lengths.max() <= max_len, f"lengths.max()={lengths.max()} > max_len={max_len}"
@@ -184,6 +183,7 @@ class MotionGeneration(torch.nn.Module):
         if not allow_empty_ckpt:
             if not os.path.exists(ckpt_name):
                 import warnings
+
                 warnings.warn(f"Checkpoint {ckpt_name} not found, skipping model loading")
             else:
                 checkpoint = torch.load(ckpt_name, map_location="cpu", weights_only=False)
@@ -222,30 +222,6 @@ class MotionGeneration(torch.nn.Module):
             should_apply_smooothing=should_apply_smooothing,
         )
 
-    def _forward_smpl_batch(
-        self,
-        root_rot6d: Tensor, # (B, L, 1, 6)
-        body_rot6d: Tensor, # (B, L, 21, 6)
-        transl: Tensor, # (B, L, 3)
-        left_hand_pose: Optional[Tensor] = None, # (B, L, 15, 6)
-        right_hand_pose: Optional[Tensor] = None, # (B, L, 16, 6)
-    ) -> Tensor:
-        device = transl.device
-        bsz, L = transl.shape[:2]
-        k3d_all = []
-        tmp_betas = torch.zeros(1, 16, device=device)
-        for bs in range(bsz):
-            out = self.body_model(
-                body_rot6d[bs],
-                tmp_betas,
-                root_rot6d[bs],
-                transl[bs],
-                left_hand_pose=(left_hand_pose[bs] if left_hand_pose is not None else None),
-                right_hand_pose=(right_hand_pose[bs] if right_hand_pose is not None else None),
-            )
-            k3d_all.append(out.detach().cpu())
-        return torch.stack(k3d_all, dim=0) # (B, L, J, 3)
-
     def _decode_o6dp(
         self,
         latent_denorm: torch.Tensor,
@@ -299,32 +275,22 @@ class MotionGeneration(torch.nn.Module):
         transl_smooth = transl_fixed
 
         if self.body_model is not None:
-            print(
+            print(
+                f"{self.__class__.__name__} rot6d_smooth shape: {rot6d_smooth.shape}, transl_smooth shape: {transl_smooth.shape}"
+            )
             with torch.no_grad():
                 vertices_all = []
                 k3d_all = []
                 for bs in range(rot6d_smooth.shape[0]):
-                    out = self.body_model.forward(
-                        {
-                            'rot6d': rot6d_smooth[bs],
-                            'trans': transl_smooth[bs],
-                        }
-                    )
+                    out = self.body_model.forward({"rot6d": rot6d_smooth[bs], "trans": transl_smooth[bs]})
                     vertices_all.append(out["vertices"])
-                    k3d_all.append(out[
+                    k3d_all.append(out["keypoints3d"])
                 vertices = torch.stack(vertices_all, dim=0)
                 k3d = torch.stack(k3d_all, dim=0)
-                print(f
-                # k3d = self._forward_smpl_batch(
-                #     rot6d_smooth[:, :, 0:1, :].to(device),
-                #     rot6d_smooth[:, :, 1:22, :].to(device),
-                #     transl_smooth,
-                #     left_hand_pose=(rot6d_smooth[:, :, 22:37, :].to(device) if left_hand_pose is not None else None),
-                #     right_hand_pose=(rot6d_smooth[:, :, 37:52, :].to(device) if right_hand_pose is not None else None),
-                # )
+                print(f"{self.__class__.__name__} vertices shape: {vertices.shape}, k3d shape: {k3d.shape}")
                 # align with the ground
                 min_y = vertices[..., 1].amin(dim=(1, 2), keepdim=True) # (B, 1, 1)
-                print(f
+                print(f"{self.__class__.__name__} min_y: {min_y}")
                 k3d = k3d.clone()
                 k3d[..., 1] -= min_y # (B, L, J) - (B, 1, 1)
                 transl_smooth = transl_smooth.clone()
```
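The comment removed from `start_end_frame_to_mask` documented what it returns: a `(B, max_len)` mask that is True only inside the inclusive window `[start_frame, end_frame]`. The diff shows only the first lines of the function, so the body below is an assumed, broadcasting-based reconstruction for illustration, not the hymotion implementation; it keeps the signature and the two asserts from the diff.

```python
import torch
from torch import Tensor


def start_end_frame_to_mask(start_frame: Tensor, end_frame: Tensor, max_len: int) -> Tensor:
    """(B, max_len) bool mask, True only for frames in the inclusive window [start_frame, end_frame]."""
    assert (start_frame >= 0).all() and (end_frame >= 0).all(), f"start_frame={start_frame}, end_frame={end_frame}"
    lengths = end_frame - start_frame + 1
    assert lengths.max() <= max_len, f"lengths.max()={lengths.max()} > max_len={max_len}"
    # Assumed body: frame indices 0..max_len-1 as a (1, max_len) row that broadcasts over the batch.
    frames = torch.arange(max_len, device=start_frame.device).unsqueeze(0)
    # Per-sample bounds as (B, 1) columns; both comparisons are inclusive.
    return (frames >= start_frame.unsqueeze(1)) & (frames <= end_frame.unsqueeze(1))
```

For example, `start_frame = [0, 2]`, `end_frame = [1, 4]`, and `max_len = 6` give one row with frames 0 and 1 set and another with frames 2 to 4 set. Building the mask by broadcasting avoids a Python loop over the batch.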

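The `# align with the ground` step in the last hunk takes each sample's lowest vertex height (y, index 1) over all frames and vertices and subtracts it from the keypoints, relying on the broadcast `(B, L, J) - (B, 1, 1)`. The following standalone sketch of that step assumes `vertices` is `(B, L, V, 3)` and `k3d` is `(B, L, J, 3)`. The function name `align_to_ground` is hypothetical, and shifting `vertices` as well is an illustrative addition: the visible hunk shifts only `k3d` before it ends.

```python
from typing import Tuple

import torch
from torch import Tensor


def align_to_ground(vertices: Tensor, k3d: Tensor) -> Tuple[Tensor, Tensor]:
    """Shift each sample vertically so its lowest vertex over all frames sits at y = 0."""
    # vertices: (B, L, V, 3); k3d: (B, L, J, 3); y (index 1) is treated as the up axis.
    min_y = vertices[..., 1].amin(dim=(1, 2), keepdim=True)  # (B, 1, 1)
    # Clone before the in-place edits so the caller's tensors are left untouched.
    k3d = k3d.clone()
    k3d[..., 1] -= min_y  # (B, L, J) - (B, 1, 1) broadcasts per sample
    vertices = vertices.clone()
    vertices[..., 1] -= min_y  # (B, L, V) - (B, 1, 1)
    return vertices, k3d
```

Taking the minimum over both the frame and vertex axes applies one offset per sequence. This keeps vertical motion such as jumps intact instead of snapping every frame to the floor.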