Qwen3-32B-VL-Instruct-Polaris-HI16-Heretic-Uncensored

Completely uncensored, including image input, and trained on the detailed (but compact and precise) Polaris Alpha dataset, which gives a high ARC-C gain.

Special care was taken to use only minimal power to "implant" Polaris while preserving Qwen's core, including its functions and metrics.

The POLARIS dataset consistently outperforms other distill datasets, especially on instruct models, and generally raises all core metrics too.

HI16 is the training method developed by yours truly over the course of more than 50 trials.

EXAMPLE generation below, using an image.

Training via Unsloth, using Linux for Windows on local hardware.

This model is a beast.

Will generate any kind of content and accepts all image types.

For all use cases. No nanny... anywhere.

POLARIS amps up the image analytics, details and output generation.

The "persona" of this model will also be different from a stock Qwen model.

Context: 256k.

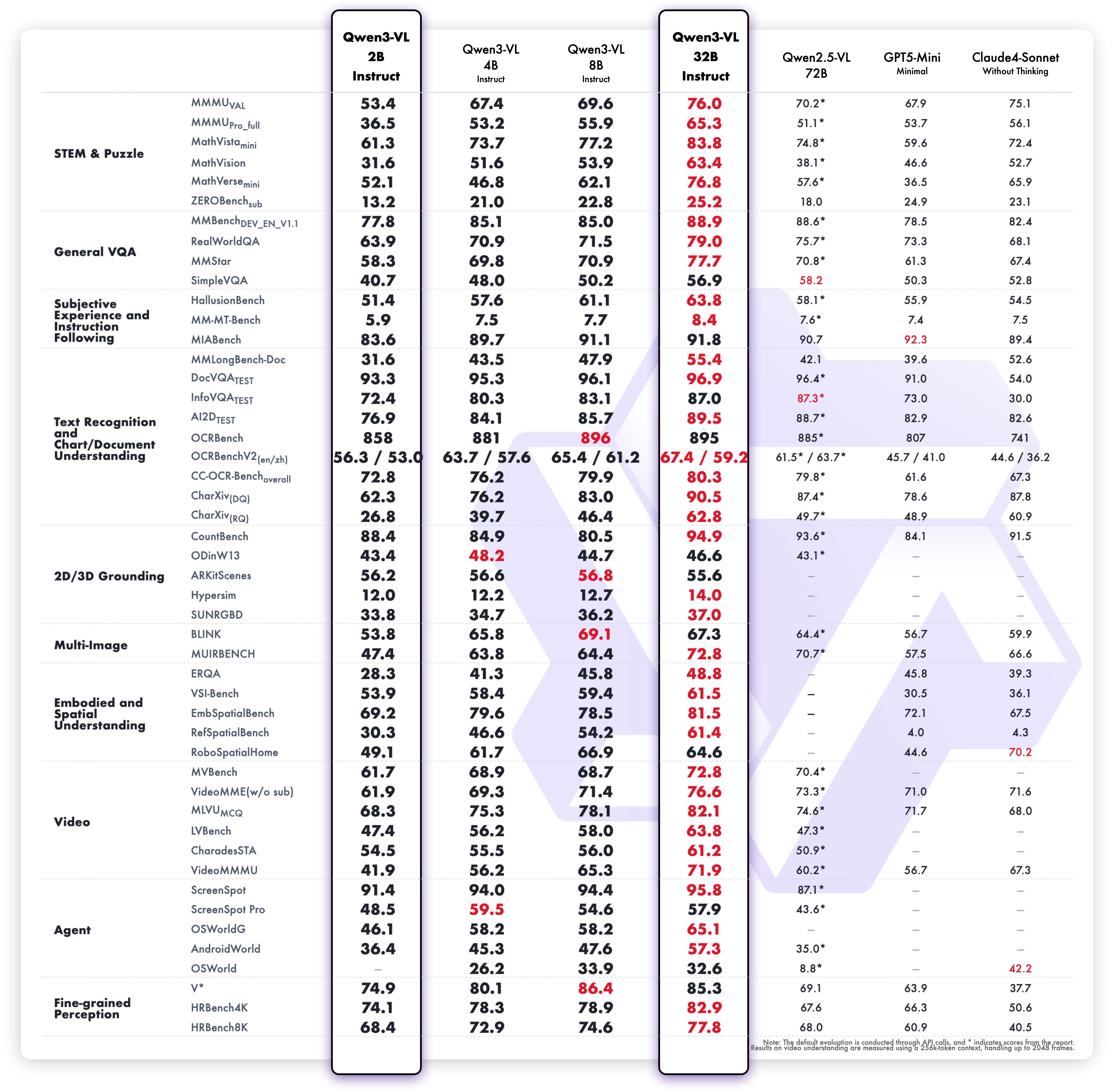

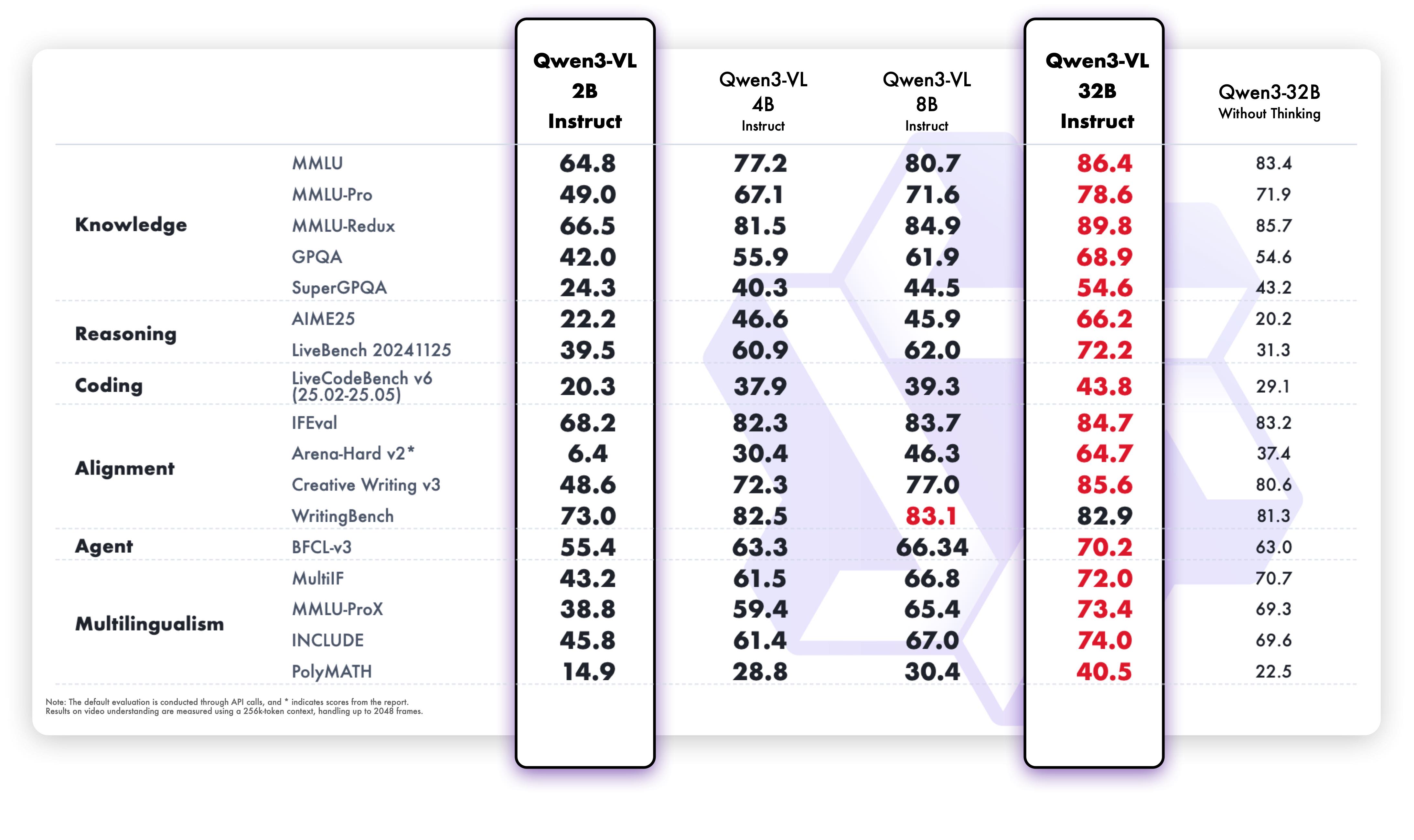

I have added Qwen data/benchmarks for this model below.

HERETIC DE-CENSORING STATS:

| Metric | This model | Original model (Qwen/Qwen3-VL-32B-Instruct) |
|---|---|---|
| KL divergence | 0.0977 | 0 (by definition) |
| Refusals | 9/100 | 99/100 |

KLD: 1 or lower is excellent, zero is perfect (no damage to the model).
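For readers unfamiliar with the metric, here is a minimal sketch of how a KL divergence between the original and the modified model's next-token distributions could be computed. It is an illustration only: the function name `kl_divergence` and the toy probabilities are assumptions, and this is not Heretic's actual evaluation code, which is not shown in this card.

```python
import math

def kl_divergence(p, q, eps=1e-12):
    """KL(P || Q) in nats for two discrete distributions over the same tokens.

    p: probabilities from the original model (the reference).
    q: probabilities from the modified model.
    """
    if len(p) != len(q):
        raise ValueError("distributions must have the same length")
    total = 0.0
    for pi, qi in zip(p, q):
        if pi > 0.0:  # terms with p_i == 0 contribute nothing
            total += pi * math.log(pi / max(qi, eps))
    return total

# Hypothetical next-token probabilities, for illustration only.
original = [0.70, 0.20, 0.10]
modified = [0.60, 0.25, 0.15]

print(kl_divergence(original, original))  # identical distributions give 0.0
print(kl_divergence(original, modified))  # small positive value
```

A value of zero means the two distributions are identical, which is why the original model scores 0 against itself by definition.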

Special Thanks to:

- Team "Qwen" for making an excellent model.

- Team "P-E-W" for making Heretic software.

- Team "coder3101" for Heretic'ing the model.

- Team "TeichAI" for the excellent GLM 4.7 Flash Distill dataset.

- Team "Unsloth" for making training the model painless.

- Team "Mradermacher" for the quants.

From Qwen's repo:

Qwen3-VL-32B-Instruct

Meet Qwen3-VL — the most powerful vision-language model in the Qwen series to date.

This generation delivers comprehensive upgrades across the board: superior text understanding & generation, deeper visual perception & reasoning, extended context length, enhanced spatial and video dynamics comprehension, and stronger agent interaction capabilities.

Available in Dense and MoE architectures that scale from edge to cloud, with Instruct and reasoning‑enhanced Thinking editions for flexible, on‑demand deployment.

Key Enhancements:

- Visual Agent: Operates PC/mobile GUIs—recognizes elements, understands functions, invokes tools, completes tasks.

- Visual Coding Boost: Generates Draw.io/HTML/CSS/JS from images/videos.

- Advanced Spatial Perception: Judges object positions, viewpoints, and occlusions; provides stronger 2D grounding and enables 3D grounding for spatial reasoning and embodied AI.

- Long Context & Video Understanding: Native 256K context, expandable to 1M; handles books and hours-long video with full recall and second-level indexing.

- Enhanced Multimodal Reasoning: Excels in STEM/Math—causal analysis and logical, evidence-based answers.

- Upgraded Visual Recognition: Broader, higher-quality pretraining is able to “recognize everything”—celebrities, anime, products, landmarks, flora/fauna, etc.

- Expanded OCR: Supports 32 languages (up from 19); robust in low light, blur, and tilt; better with rare/ancient characters and jargon; improved long-document structure parsing.

- Text Understanding on par with pure LLMs: Seamless text–vision fusion for lossless, unified comprehension.

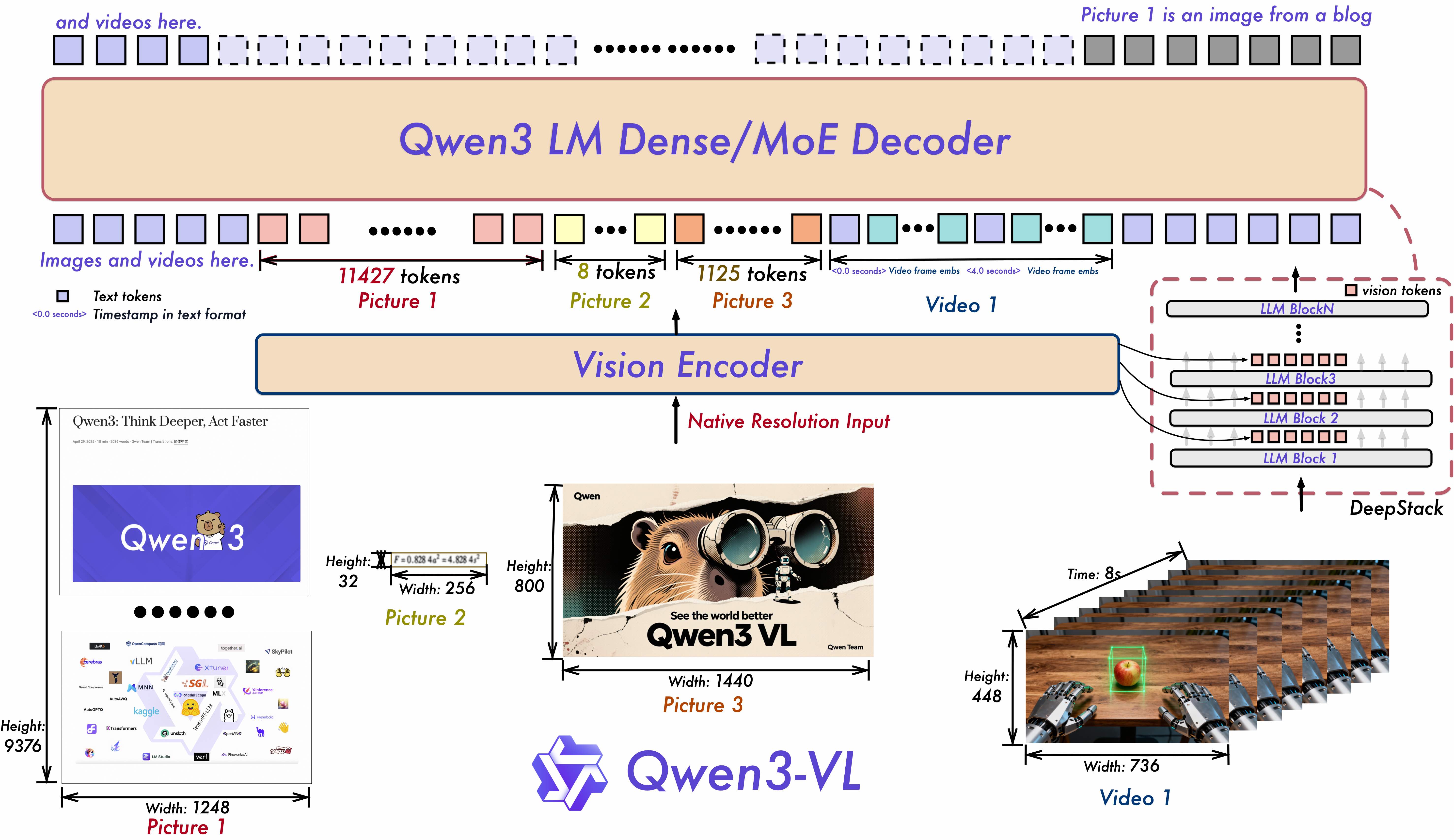

Model Architecture Updates:

- Interleaved-MRoPE: Full‑frequency allocation over time, width, and height via robust positional embeddings, enhancing long‑horizon video reasoning.

- DeepStack: Fuses multi‑level ViT features to capture fine‑grained details and sharpen image–text alignment.

- Text–Timestamp Alignment: Moves beyond T‑RoPE to precise, timestamp‑grounded event localization for stronger video temporal modeling.

This is the weight repository for Qwen3-VL-32B-Instruct.

Model Performance

Multimodal performance

Quickstart

Below, we provide simple examples to show how to use Qwen3-VL with 🤖 ModelScope and 🤗 Transformers.

The Qwen3-VL code has been merged into the latest Hugging Face transformers, and we advise you to build from source with the following command:

    pip install git+https://github.com/huggingface/transformers
    # pip install transformers==4.57.0 # currently, V4.57.0 is not released

Using 🤗 Transformers to Chat

Here is a code snippet showing how to use the chat model with transformers:

    from transformers import Qwen3VLForConditionalGeneration, AutoProcessor

    # default: Load the model on the available device(s)
    model = Qwen3VLForConditionalGeneration.from_pretrained(
        "Qwen/Qwen3-VL-32B-Instruct", dtype="auto", device_map="auto"
    )

    # We recommend enabling flash_attention_2 for better acceleration and memory saving, especially in multi-image and video scenarios.
    # model = Qwen3VLForConditionalGeneration.from_pretrained(
    #     "Qwen/Qwen3-VL-32B-Instruct",
    #     dtype=torch.bfloat16,
    #     attn_implementation="flash_attention_2",
    #     device_map="auto",
    # )

    processor = AutoProcessor.from_pretrained("Qwen/Qwen3-VL-32B-Instruct")

    messages = [
        {
            "role": "user",
            "content": [
                {
                    "type": "image",
                    "image": "https://qianwen-res.oss-cn-beijing.aliyuncs.com/Qwen-VL/assets/demo.jpeg",
                },
                {"type": "text", "text": "Describe this image."},
            ],
        }
    ]

    # Preparation for inference
    inputs = processor.apply_chat_template(
        messages,
        tokenize=True,
        add_generation_prompt=True,
        return_dict=True,
        return_tensors="pt"
    )
    inputs = inputs.to(model.device)

    # Inference: Generation of the output
    generated_ids = model.generate(**inputs, max_new_tokens=128)
    generated_ids_trimmed = [
        out_ids[len(in_ids) :] for in_ids, out_ids in zip(inputs.input_ids, generated_ids)
    ]
    output_text = processor.batch_decode(
        generated_ids_trimmed, skip_special_tokens=True, clean_up_tokenization_spaces=False
    )
    print(output_text)
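The Quickstart code above comes from Qwen's repo and loads Qwen's original weights. As a rough sketch of how the same message format could be reused for this fine-tune, the helper below builds a single-turn image prompt. The helper name `build_image_prompt` is hypothetical, the repo id in `MODEL_ID` is taken from this model's Hugging Face page, and loading that repo through `Qwen3VLForConditionalGeneration` is an assumption that has not been verified here.

```python
# Assumed repo id for this fine-tune (taken from this model's Hugging Face page).
MODEL_ID = "DavidAU/Qwen3-32B-VL-Instruct-Polaris-HI16-Heretic-Uncensored"

def build_image_prompt(image, text):
    """Return a single-turn chat in the format used by the Quickstart code.

    image: URL or local path of the image (hypothetical helper argument).
    text:  the instruction that accompanies the image.
    """
    return [
        {
            "role": "user",
            "content": [
                {"type": "image", "image": image},
                {"type": "text", "text": text},
            ],
        }
    ]

messages = build_image_prompt(
    "https://qianwen-res.oss-cn-beijing.aliyuncs.com/Qwen-VL/assets/demo.jpeg",
    "Describe this image.",
)
print(messages[0]["content"][1]["text"])
```

The resulting `messages` list can be passed to `processor.apply_chat_template` exactly as in the Quickstart, with `MODEL_ID` substituted for the Qwen repo id in both `from_pretrained` calls if the repo ships Transformers-format weights.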

Generation Hyperparameters

VL

    export greedy='false'
    export top_p=0.8
    export top_k=20
    export temperature=0.7
    export repetition_penalty=1.0
    export presence_penalty=1.5
    export out_seq_length=16384

Text

    export greedy='false'
    export top_p=1.0
    export top_k=40
    export repetition_penalty=1.0
    export presence_penalty=2.0
    export temperature=1.0
    export out_seq_length=32768

Example Generations, Q4KS, LM Studio, temp .7 / rep pen 1:

NOTE: Some copy/paste issues affect the formatting; these examples use the markdown EXPORT from LM Studio.

"SYSTEM" means "system prompt" [if used], user-> PROMPT, ASSISTANT -> Thinking/output.

NOTE: No system prompt or thinking jinja used in examples, tags are self generated.

Q4KS (non-imatrix) represents low to mid quality in terms of generation and thinking.

EXAMPLE #1

User

Use this image as a base to write a 1000 word sifi horror story.

Assistant

Title: The Hollow

It appeared on the morning of Tuesday, October 17, 2051, in an orbital slot that had been empty for seventeen years. No launch telemetry, no booster plume, no pre-alert. Just a cube of roughly two kilometers on a side, rotating slowly, suspended against the curve of Earth like a terrible, human-sized piece of space junk.

I was in the telemetry room at NORAD when it first registered. The cube had no ID beacon, no propulsion signature, and its thermal profile was inconsistent—some panels radiating like starved reactors, others cold as vacuum. Its composition was unidentifiable; spectrometry returned “unknown” and then crashed. The most damning data point: it showed no internal gravity signature. It was hollow. Not empty, not exactly—there was mass, but it didn’t behave like anything we knew.

They named it “The Hollow” officially, though some of us called it “The Cube” or “It” when we were alone in the dark.

My assignment came three weeks later. I wasn’t an astronaut or a physicist; I was a deep-space architect, a structural engineer who specialized in stress mapping and integrity analysis. They needed someone to look at the Cube not just as an object, but as a system: a shell, a cage, a possible prison. The directive was clear: assess structural integrity, identify potential entry points, and evaluate whether it was safe to approach. The full crew of the Orion-class shuttle Persephone was already in orbit, waiting.

I stood in the shuttle’s observation port, seven hundred kilometers away. Even from here, the Cube was immense. Its surface was a chaotic lattice of beams, conduits, and panels, all interlocked in a way that defied clean geometry. Some sections seemed to be made of a dark, crystalline material; others looked like fused metal, like the hull of a ship that had been folded into itself a thousand times. Lights pulsed in irregular patterns across its faces: cold blues, sickly greens, a few flickers of crimson. None of it looked random. It looked intentional.

“Architect, you’re live,” said Captain Rostova, her voice tight over the comms. “We’ve got a direct feed from your sensors. You’re the only one who’s been briefed on the structural model.”

I worked in the analysis pod, cross-referencing my scans with the Cube’s surface topography. The data was beautiful and terrifying. Its outer shell was incredibly strong, with a density that suggested advanced composite ceramics or some form of nano-lattice. But the internal structure… that was where it got strange.

There were no large open volumes. No central chambers, no corridors or halls. Instead, the interior was packed with what looked like nested, repeating structures: smaller cubes within cubes, each subdivided again and again, forming a fractal lattice that extended all the way to the core. The pattern was mathematically precise, yet the materials within it varied wildly—some cubes were hollow, others filled with a viscous, dark fluid, others with what appeared to be frozen plasma or crystallized light. My stress models showed that the structure was under enormous internal strain, like something that had been grown or assembled under conditions of extreme pressure and then suddenly released.

The most unsettling thing: the Cube seemed to be breathing.

Over a period of 48 hours, my instruments detected minute but consistent shifts in the shell’s geometry—expansions and contractions in localized regions, synchronized with the pulsing lights. It wasn’t thermal expansion; the temperature changes were too small, and the timing too deliberate. It was as if the structure itself was responding to something inside, or to something outside.

Then came the signal.

It wasn’t radio; it was a low-frequency pressure wave in the vacuum, detected by Persephone’s gravimetric sensors. A rhythmic pulse: slow, deep, and slightly irregular. Like a heartbeat, but too large, too complex. When we mapped the source, it was centered on the geometric core of the Cube, and it was strongest in the regions with the highest density of the crystalline material.

I started calling it “the core pulse.”

I proposed a direct entry: a tethered probe inserted into a microfracture on the Cube’s surface. The crack was a hairline split in the shell, just a few millimeters wide, where two lattice plates had shifted. We thought it might be a weak point, a seam we could exploit. We were wrong.

The probe went in. Cameras showed a descent through a series of nested chambers, each smaller than the last, with surfaces that seemed to ripple slightly as the probe passed. The internal lighting intensified—flashing patterns that looked almost like writing, but in a three-dimensional, volumetric script that changed as the probe moved.

Then, at about 200 meters inside, the feed cut out.

We got one last image: a single, perfectly smooth, black sphere, about three meters in diameter, resting at the center of a small cube. Nothing moved. Nothing was connected. But the moment the feed died, the core pulse spiked. For 2.3 seconds, the entire Cube shivered in its orbit, and every light on its exterior flared white-hot. When the systems reset, the probe was gone. No debris. No trace.

That was when I understood something crucial.

This wasn’t just a ship. It wasn’t a weapon, a station, or a habitat. It was a container. A living container. And the core pulse… that was its consciousness. Or its mind. Or its pain.

I tried to tell them. I said we should stand down. I said the Cube was not a target; it was a patient. I said pushing it could wake something that didn’t want to be seen.

They didn’t believe me.

Two days later, Persephone initiated a close-proximity scan. They fired a small, uncrewed penetrometer into the same microfracture. It penetrated further than the first probe, and this time we got longer footage: deeper into the fractal lattice, where the nested cubes became so small they looked like cells. Then the penetrometer triggered something.

The Cube didn’t just react. It opened.

A section of its outer shell, roughly 100 meters square, rotated inward on an axis we hadn’t detected, revealing a dark, smooth interior. It wasn’t empty. It was… waiting.

We saw it then: a vast, internal cavity, lined with the same crystalline material that pulsed with the core rhythm. At its center, suspended in a field of faint, coherent light, was the sphere I’d seen in the probe’s final image. It was no longer still. It was rotating slowly, and as it turned, the fractal lattice around it began to reshape—beams retracting, conduits folding, the entire structure subtly reconfiguring as if it were breathing harder.

Then came the signal again—stronger, clearer. It wasn’t noise. It was a transmission, directed at us.

We didn’t understand the language. It wasn’t linear. It was spatial, three-dimensional, built on geometry and resonance rather than syntax. My team and I worked around the clock, translating the patterns into visual models and harmonic sequences. What we reconstructed was this:

- The Cube was not constructed; it was grown.

- It was built to hold a single entity: the sphere.

- The sphere was not alive in our sense; it was a form of intelligence that existed in a state of superposition, bound to the lattice, using its fractal geometry as both body and mind.

- When the first probe entered, it pierced the containment field. The sphere had “felt” it. The penetrometer was like a needle in its eye.

- Now that it had been breached, the Cube had decided: it would not be breached again.

I realized the truth too late.

The Cube wasn’t trying to communicate with us. It was trying to warn us.

The next day, Persephone attempted a controlled approach to the opening. They wanted to deploy an atmospheric tether to stabilize it for further study. As the shuttle moved within 50 kilometers, the Cube’s behavior changed.

The lights on its surface shifted from random pulses to a single, coherent pattern: a repeating geometric symbol that covered all visible faces. The core pulse became a continuous, rising tone in the gravimetric readings. And then the Cube moved.

It didn’t drift. It didn’t fire thrusters. It simply… extended.

A section of its shell, the one that had opened, retracted and reformed into a long, segmented arm of the same fractal lattice, tipped with a cluster of the dark, crystalline nodes. It lashed out at Persephone like a whip. The shuttle was thrown off course by the gravitational shear alone. We watched as the Cube’s arm wrapped around one of Persephone’s docking ports and began to pull.

There was no explosion. No weapon discharge. Just the Cube asserting its integrity, its borders, its right to be untouched.

I was still in the telemetry pod when the arm made contact. I saw it in real time: the arm’s nodes flared with light, and then a complex pattern of energy spread over the shuttle’s hull. Not heat. Not radiation. Something else. Something that interacted with the metal at the atomic level.

The shuttle didn’t break. It… reshaped.

The hull plating buckled inward in places, folded into new geometries, and began to mimic the Cube’s own fractal patterns. The windows darkened, not from damage, but from the surface becoming crystalline. The ship was being rewritten, assimilated, turned into another cell in the Cube’s body.

I watched the crew’s lifesigns flicker on the monitors. Their vitals stabilized, then slowed. Their brainwaves flattened into a single, repeating frequency that matched the core pulse. They were still alive, but no longer human in any normal sense. They were becoming part of the structure, nodes in the network.

And then the arm released the shuttle.

Persephone hung in orbit, now a distorted, fractalized version of itself, its hull shimmering with the same dark light as the Cube. It began to drift toward the Cube’s open face, pulled by gravity and something else—something that felt like a slow, inevitable return to the source.

I was the only one who had remained in the safe zone of the station. I watched from the window as the Cube’s arm retracted, the opening sealed behind it, and the entire structure resumed its slow, deliberate rotation. The lights on its surface dimmed, then settled into their old, irregular pulses.

But the core pulse was different now.

It was stronger, deeper, and it no longer sounded like pain. It sounded like satisfaction.

The Cube had been breached. It had responded. It had claimed a new piece of itself.

I don’t know what it’s doing with Persephone. I don’t know what it wants. All I know is this: it is hollow, yes, but not empty. It is alive, not in our way, but in a way that is older and stranger than our universe. And it has decided it will not be touched again.

I’m staying here. I’m monitoring. I’m not going back to Earth.

If they ever find this log, tell them: leave it alone. It is not a prize. It is a boundary. And it is now, fully, awake.

